Spotify API ELT

End-to-end ELT pipeline for Spotify data with orchestration, testing & CI/CD


Apache Airflow · Soda · Python · PostgreSQL


About this project

This project demonstrates a production-ready end-to-end ELT pipeline built on top of the Spotify API.

The pipeline simulates a record label tracking its artists' catalogue and music performance.

Data is extracted from Spotify, loaded into a PostgreSQL data warehouse, and transformed into analytics-ready tables.

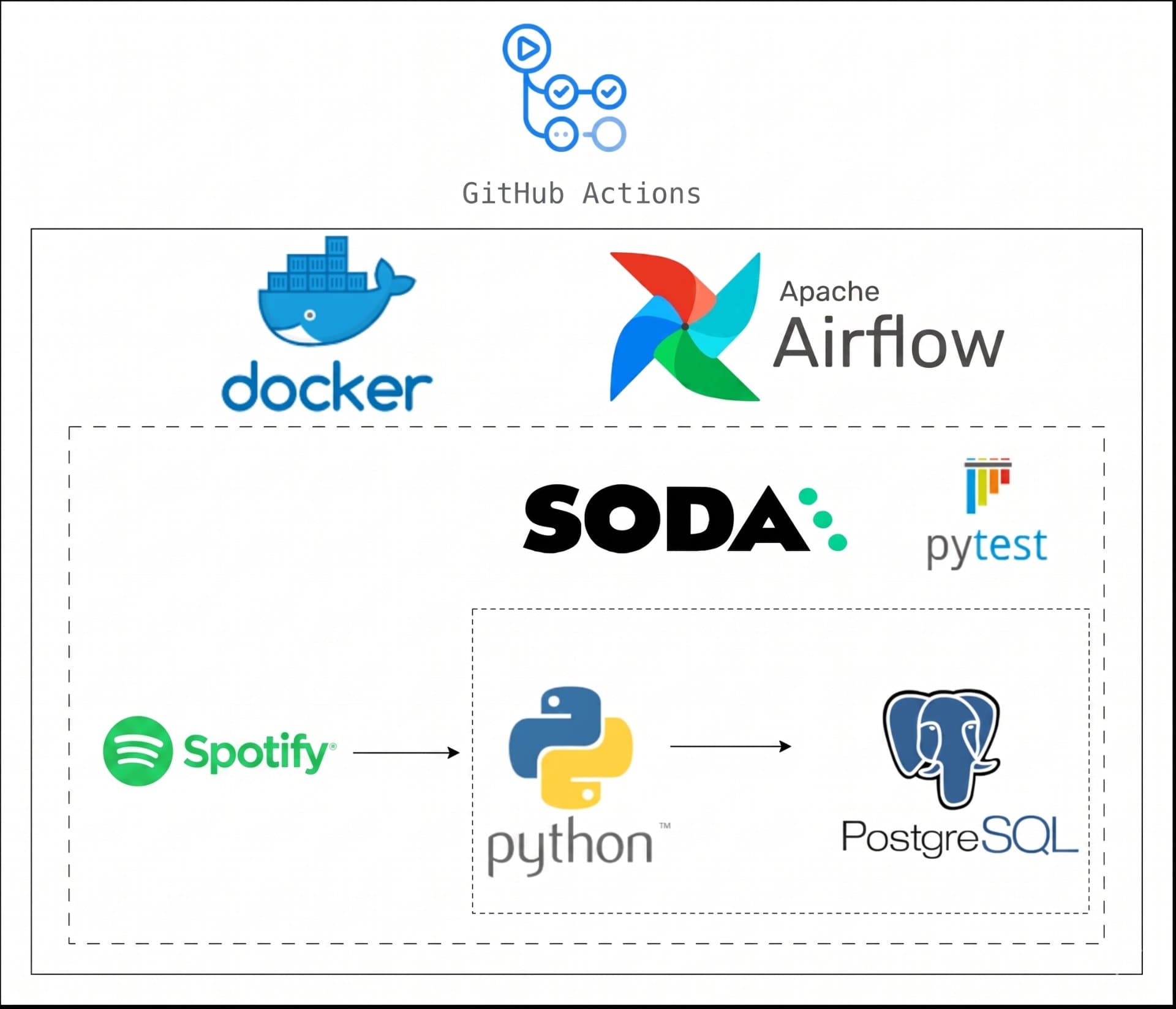

Architecture & Flow:

- Extract: Spotify API integration using Python

- Load: Raw data ingestion into PostgreSQL

- Transform: Data modeling into three relational tables

- Orchestration: Apache Airflow DAG for scheduling and dependency management

- Containerization: Docker-based environment

- Testing: Unit tests with pytest + data quality checks using Soda

- CI/CD: Automated workflows via GitHub Actions
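The extract, load, and transform stages listed above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the project's actual code: the payload shape, the function names, and the three table names (`artists`, `albums`, `tracks`) are hypothetical, the Spotify API call is replaced by canned records, and an in-memory SQLite database stands in for PostgreSQL so the sketch runs without any services.

```python
import json
import sqlite3

# Hypothetical records; the real Spotify API response carries many more
# fields and a different nesting, which this sketch does not reproduce.
SAMPLE_PAYLOAD = [
    {"artist": {"id": "a1", "name": "Artist One"},
     "album": {"id": "al1", "name": "First Album"},
     "track": {"id": "t1", "name": "Opening Track", "popularity": 61}},
    {"artist": {"id": "a1", "name": "Artist One"},
     "album": {"id": "al1", "name": "First Album"},
     "track": {"id": "t2", "name": "Second Track", "popularity": 48}},
]


def extract():
    """Stand-in for the Spotify API call: returns canned records."""
    return SAMPLE_PAYLOAD


def load(conn, records):
    """Land each record unchanged, as JSON text, in a raw table."""
    conn.execute("CREATE TABLE IF NOT EXISTS raw_tracks (payload TEXT)")
    conn.executemany(
        "INSERT INTO raw_tracks (payload) VALUES (?)",
        [(json.dumps(record),) for record in records],
    )


def transform(conn):
    """Model the raw rows into three relational tables (assumed names)."""
    conn.executescript("""
        CREATE TABLE artists (artist_id TEXT PRIMARY KEY, name TEXT);
        CREATE TABLE albums (album_id TEXT PRIMARY KEY, artist_id TEXT,
                             name TEXT);
        CREATE TABLE tracks (track_id TEXT PRIMARY KEY, album_id TEXT,
                             name TEXT, popularity INTEGER);
    """)
    raw_rows = conn.execute("SELECT payload FROM raw_tracks").fetchall()
    for (payload,) in raw_rows:
        record = json.loads(payload)
        artist, album, track = record["artist"], record["album"], record["track"]
        # INSERT OR IGNORE de-duplicates artists and albums repeated across tracks.
        conn.execute("INSERT OR IGNORE INTO artists VALUES (?, ?)",
                     (artist["id"], artist["name"]))
        conn.execute("INSERT OR IGNORE INTO albums VALUES (?, ?, ?)",
                     (album["id"], artist["id"], album["name"]))
        conn.execute("INSERT OR IGNORE INTO tracks VALUES (?, ?, ?, ?)",
                     (track["id"], album["id"], track["name"],
                      track["popularity"]))


def run_pipeline():
    """Run the three stages in dependency order and return the connection."""
    conn = sqlite3.connect(":memory:")
    load(conn, extract())
    transform(conn)
    return conn
```

Keeping the raw payload untouched in `raw_tracks` is what makes this ELT rather than ETL: the modeling step can be rerun or changed without calling the API again. In the project itself, an orchestrator such as the Airflow DAG would be responsible for running these stages in order.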

Key Highlights:

- Modular and scalable pipeline design

- Clear separation between extraction, loading, and transformation layers

- Production-oriented practices (testing, CI/CD, orchestration)

- Fully containerised setup for easy deployment
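To make the testing practice more concrete, here is a plain-Python sketch of the kind of data quality checks a pipeline like this might run after loading: a non-empty table, no missing keys, and no duplicate keys. It is a stand-in for illustration only. It does not use Soda's syntax or API, and the function name, the check labels, and the `track_id` column are assumptions.

```python
def check_rows(rows, key):
    """Return a list of failure messages for a batch of dict rows.

    An empty list means every check passed. The labels are plain strings
    chosen for this sketch, not the output format of any tool.
    """
    failures = []

    # Check 1: the table must contain at least one row.
    if not rows:
        failures.append("row_count: table is empty")

    # Check 2: the key column must never be missing or None.
    keys = [row.get(key) for row in rows]
    missing = sum(1 for value in keys if value is None)
    if missing:
        failures.append(f"missing_count({key}): {missing}")

    # Check 3: non-null key values must be unique.
    present = [value for value in keys if value is not None]
    duplicates = len(present) - len(set(present))
    if duplicates:
        failures.append(f"duplicate_count({key}): {duplicates}")

    return failures
```

A function like this is easy to cover with pytest, since it takes plain data and returns plain data; for example, `check_rows([{"track_id": "t1"}, {"track_id": "t1"}], "track_id")` reports one duplicate.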

This project reflects real-world data engineering workflows, where data pipelines are automated, monitored, and validated to ensure reliability and scalability.

Stack:

Apache Airflow