Automated News Intelligence Pipeline

An end-to-end automated pipeline for collecting, processing, and analyzing news articles with machin

Apache Airflow · Apache Spark · PostgreSQL

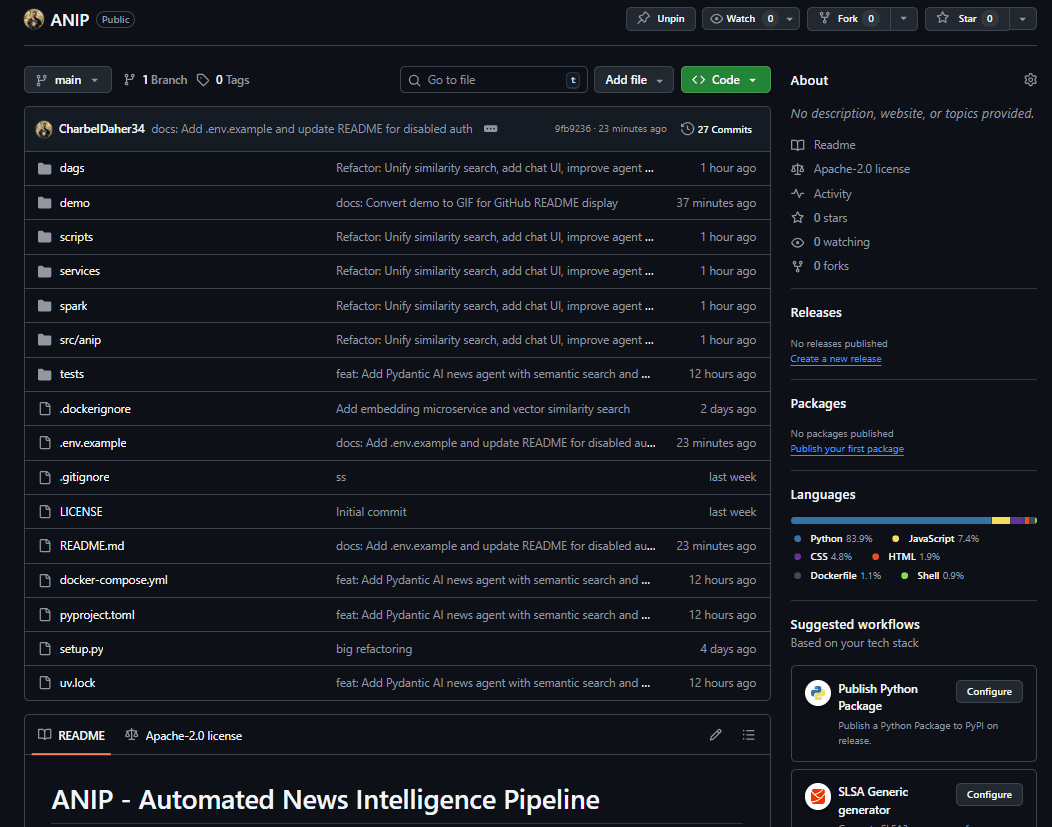

About this project

📌 Project Overview

ANIP (Automated News Intelligence Pipeline) is a fully end-to-end news intelligence system that ingests articles from multiple global news APIs, processes them using distributed data pipelines, enriches them with machine-learning models, and stores them in a vector-enabled database for semantic search.

The platform combines Apache Airflow, Apache Spark, MLflow, pgvector, and a FastAPI service layer to deliver a production-grade pipeline for real-time news retrieval, classification, sentiment analysis, embedding generation, and intelligent search/chat capabilities.

ANIP is designed to be modular, scalable, and easily extensible, making it well suited to analytics, monitoring, and AI-powered news applications.
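As a rough illustration of the multi-API ingestion step described above, the sketch below maps raw payloads from two of the sources into one record type and drops duplicates by URL. The `Article` dataclass and the `from_newsapi`, `from_mediastack`, and `dedupe` helpers are hypothetical names, not ANIP's actual code. The payload keys (`publishedAt` for NewsAPI, `published_at` for MediaStack) are assumed from those services' public response formats.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Any, Iterable, List, Mapping, Optional


@dataclass(frozen=True)
class Article:
    """Hypothetical unified record; the field names are illustrative only."""
    source_api: str
    title: str
    url: str
    published_at: Optional[datetime]


def _parse_iso(value: Optional[str]) -> Optional[datetime]:
    """Parse an ISO 8601 timestamp, tolerating a trailing 'Z'."""
    if not value:
        return None
    parsed = datetime.fromisoformat(value.replace("Z", "+00:00"))
    # Treat naive timestamps as UTC so records from all sources compare cleanly.
    return parsed if parsed.tzinfo else parsed.replace(tzinfo=timezone.utc)


def from_newsapi(raw: Mapping[str, Any]) -> Article:
    # Assumed NewsAPI keys: "title", "url", "publishedAt".
    return Article("newsapi", raw["title"].strip(), raw["url"],
                   _parse_iso(raw.get("publishedAt")))


def from_mediastack(raw: Mapping[str, Any]) -> Article:
    # Assumed MediaStack keys: "title", "url", "published_at".
    return Article("mediastack", raw["title"].strip(), raw["url"],
                   _parse_iso(raw.get("published_at")))


def dedupe(articles: Iterable[Article]) -> List[Article]:
    """Keep the first article seen for each URL, preserving input order."""
    seen = set()
    unique = []
    for article in articles:
        if article.url not in seen:
            seen.add(article.url)
            unique.append(article)
    return unique
```

Keeping one small adapter per source means a new API only needs its own `from_*` function. Everything downstream of ingestion sees the same `Article` shape.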

🔥 Key Features

Multi-source News Ingestion

Pulls structured news data from NewsAPI, NewsData, GDELT, MediaStack, and more with unified ingestion pipelines.

Distributed ML Processing (Spark)

Performs article classification, sentiment analysis, and embedding generation using scalable Spark jobs.

Semantic Search with pgvector

Stores embeddings in PostgreSQL with pgvector, enabling similarity queries and rich semantic retrieval.

ML Model Training & Tracking (MLflow)

Full training pipeline for classifiers and embedding models with experiment tracking and version management.

Airflow Orchestration

Automated DAGs for ingestion, preprocessing, ML training, embedding generation, and database updates.

FastAPI Service Layer

REST API for querying articles, semantic search, statistics, and integrated conversational AI.

Chat-Based News Agent

Combines external live search + internal database embeddings to answer topical questions with relevant articles.

Modular Microservices Architecture

Each component (API, embeddings, MLflow server, database, Spark master/worker, Airflow) runs in isolated containers.

Docker-Compose Deployment

Single command to spin up the entire analytics pipeline locally for development or demo purposes.
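To make the pgvector-backed semantic search feature above more concrete, here is a minimal sketch of how a similarity query might be assembled. It assumes a hypothetical `articles` table with `id`, `title`, `url`, and `embedding` columns; the project's real schema is not shown in this overview. `<=>` is pgvector's cosine-distance operator, and the `%s` placeholders follow the psycopg parameter style. The small `cosine_distance` helper is included only to show what that operator computes.

```python
import math
from typing import Sequence, Tuple


def to_vector_literal(embedding: Sequence[float]) -> str:
    """Format an embedding in pgvector's text form, e.g. '[0.1,2.0,-3.5]'."""
    return "[" + ",".join(repr(float(x)) for x in embedding) + "]"


def build_similarity_query(embedding: Sequence[float], limit: int = 5) -> Tuple[str, tuple]:
    """Return (sql, params) for a nearest-neighbour lookup by cosine distance.

    The table and column names are assumptions made for illustration.
    """
    sql = (
        "SELECT id, title, url, embedding <=> %s::vector AS distance "
        "FROM articles "
        "ORDER BY embedding <=> %s::vector "
        "LIMIT %s"
    )
    literal = to_vector_literal(embedding)
    # The literal is passed twice: once for the SELECT list, once for ORDER BY.
    return sql, (literal, literal, limit)


def cosine_distance(a: Sequence[float], b: Sequence[float]) -> float:
    """Pure-Python reference for the value `<=>` returns: 1 - cosine similarity."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return 1.0 - dot / (norm_a * norm_b)
```

With a database connection in hand, the returned pair could be passed straight to a cursor's `execute(sql, params)`. Keeping the values as parameters rather than formatting them into the SQL string avoids injection issues.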