Apache Airflow · MinIO · Python · PostgreSQL · Apache Spark

About this project

Hi Folks,

This is Ravi Teja Chintalapudi. I am a Data Engineer, and I build projects locally to learn and to share what I learn with my virtual neighbors.

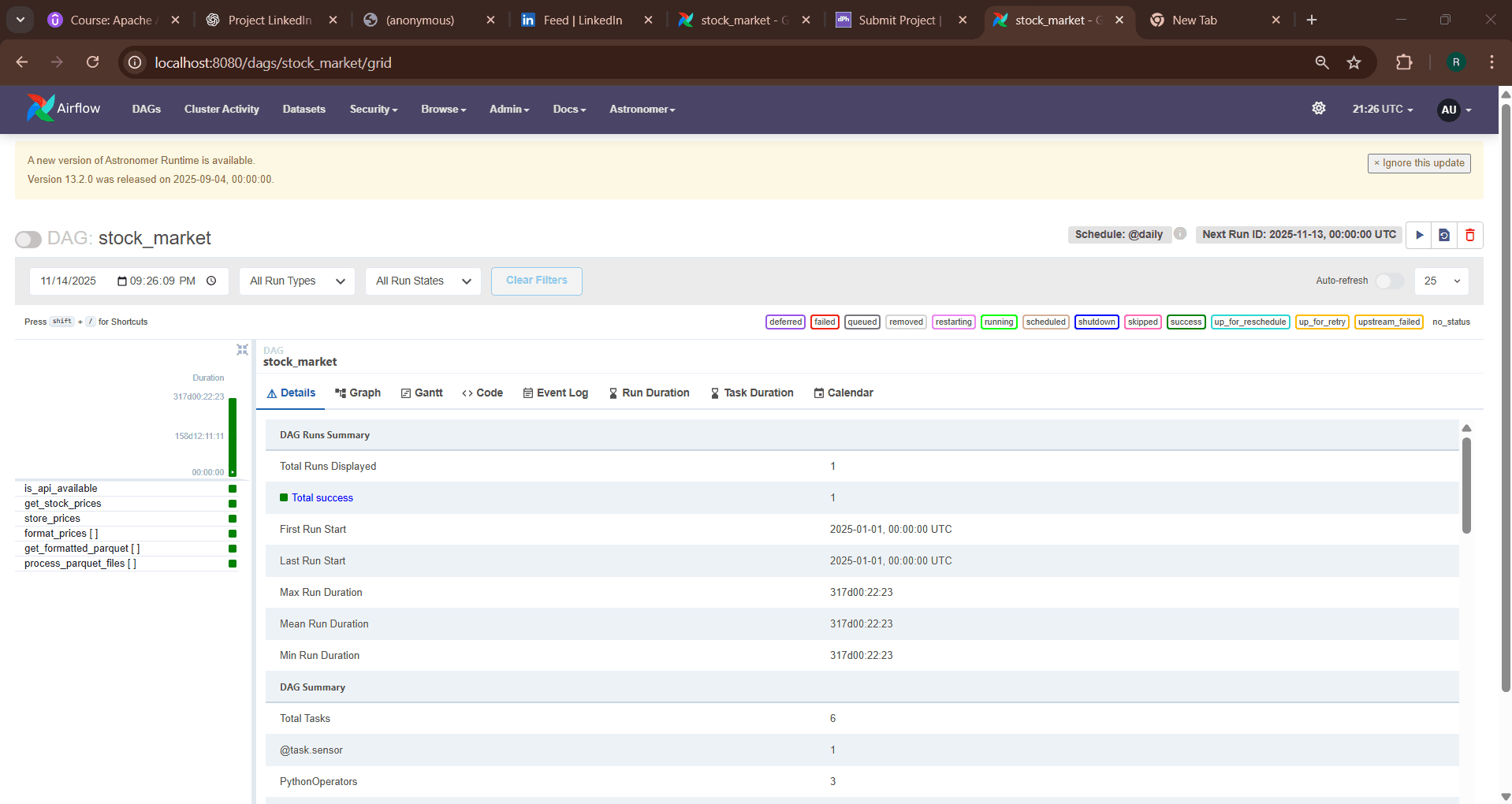

I enrolled in Marc Lamberti's Airflow course, in which he builds an ETL pipeline that ingests the stock price of a single symbol, NVDA, from the Yahoo Finance API. The pipeline uses MinIO to stage the raw data and PySpark to process it, then loads the result into a data warehouse (DW) that is in turn connected to Metabase. Everything runs in Docker containers on a network he set up, so all the applications can communicate with one another.
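The processing step essentially reshapes the nested JSON returned by the stock API into one row per trading day. The sketch below shows that reshaping in plain Python so it can be read and run without Spark. It assumes a Yahoo-style chart response shape (`chart.result[0].timestamp` plus `indicators.quote[0]` lists aligned by position); the function name `flatten_chart` and the output field names are hypothetical.

```python
from datetime import datetime, timezone


def flatten_chart(payload: dict) -> list[dict]:
    """Flatten one Yahoo-style chart response into one row per trading day.

    Assumed shape (hypothetical, for illustration):
    chart.result[0].meta.symbol, chart.result[0].timestamp (Unix seconds),
    chart.result[0].indicators.quote[0].{open, high, low, close, volume},
    where all lists are aligned by position.
    """
    result = payload["chart"]["result"][0]
    symbol = result["meta"]["symbol"]
    quote = result["indicators"]["quote"][0]
    rows = []
    for i, ts in enumerate(result["timestamp"]):
        if quote["close"][i] is None:
            continue  # skip days with no closing price
        rows.append({
            "symbol": symbol,
            # Convert the Unix timestamp to a UTC calendar date string
            "date": datetime.fromtimestamp(ts, tz=timezone.utc).date().isoformat(),
            "open": quote["open"][i],
            "high": quote["high"][i],
            "low": quote["low"][i],
            "close": quote["close"][i],
            "volume": quote["volume"][i],
        })
    return rows
```

In the pipeline itself this reshaping is done by PySpark over the staged files; the plain-Python version only makes the transformation easy to see.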

Because a single symbol was not enough data to work with, I scaled the pipeline on the same foundation and the same Docker network. I ingested five symbols (NVDA, GOOG, AAPL, MSFT, AMZN) for 90 days and staged them in MinIO (S3-compatible object storage) in the same way. I then extended the PySpark job to read each dated file from the bucket, flatten it, and write it back to MinIO as Parquet. Finally, I loaded the data into the PostgreSQL DW and connected Metabase to build visualizations of key stock KPIs.
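Scaling from one symbol to five over 90 days mostly means organizing the staged files so each one can be found by symbol and date. The sketch below generates such a layout. The bucket name `stock-market` and the `<symbol>/<YYYY-MM-DD>/prices.json` key pattern are hypothetical, not taken from the project; weekends are skipped because markets are closed, while exchange holidays are not handled.

```python
from datetime import date, timedelta


def dated_keys(symbols, end: date, days: int, bucket: str = "stock-market") -> list[str]:
    """Return one object path per symbol per weekday in the window, oldest first.

    The bucket name and the <symbol>/<YYYY-MM-DD>/prices.json layout are
    hypothetical. Weekends are skipped; exchange holidays are not handled.
    """
    start = end - timedelta(days=days - 1)
    keys = []
    for symbol in symbols:
        for offset in range(days):
            day = start + timedelta(days=offset)
            if day.weekday() >= 5:  # 5 = Saturday, 6 = Sunday
                continue
            keys.append(f"{bucket}/{symbol}/{day.isoformat()}/prices.json")
    return keys


# Example: five symbols over a 90-day window (Amazon's ticker is AMZN).
all_keys = dated_keys(["NVDA", "GOOG", "AAPL", "MSFT", "AMZN"], end=date(2024, 3, 31), days=90)
```

With a layout like this, a Spark job could read all of one symbol's files with a wildcard path such as `s3a://stock-market/NVDA/*/prices.json`, assuming the `s3a` connector is configured to point at MinIO.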

YAHOO <--- IS_API_AVAILABLE ---> FETCH_STOCK_PRICES ---> STORE_PRICES ---> FORMAT_PRICES ---> GET_FORMATTED_PRICES ---> LOAD_TO_DW
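The last task in the chain, LOAD_TO_DW, is easiest to re-run safely when it is idempotent: loading the same day twice should update the row rather than duplicate it. The sketch below shows one way to do that with an upsert keyed on symbol and trade date. It uses Python's built-in `sqlite3` as a stand-in for PostgreSQL so it runs without a server; the table name `stock_prices`, its columns, and `load_to_dw` are hypothetical. The `ON CONFLICT ... DO UPDATE` clause is valid in both databases, though a PostgreSQL driver such as psycopg2 uses `%(name)s` placeholders instead of `:name`.

```python
import sqlite3

# Hypothetical warehouse table: one row per symbol per trading day.
DDL = """
CREATE TABLE IF NOT EXISTS stock_prices (
    symbol     TEXT NOT NULL,
    trade_date TEXT NOT NULL,
    open REAL, high REAL, low REAL, close REAL, volume INTEGER,
    PRIMARY KEY (symbol, trade_date)
)
"""

# Insert new rows; if (symbol, trade_date) already exists, overwrite its values.
UPSERT = """
INSERT INTO stock_prices (symbol, trade_date, open, high, low, close, volume)
VALUES (:symbol, :trade_date, :open, :high, :low, :close, :volume)
ON CONFLICT (symbol, trade_date) DO UPDATE SET
    open = excluded.open, high = excluded.high, low = excluded.low,
    close = excluded.close, volume = excluded.volume
"""


def load_to_dw(conn: sqlite3.Connection, rows: list[dict]) -> None:
    """Create the table if needed and upsert all rows in one transaction."""
    with conn:  # commits on success, rolls back on error
        conn.execute(DDL)
        conn.executemany(UPSERT, rows)
```

Because the primary key is `(symbol, trade_date)`, re-running the DAG for the same dates leaves exactly one row per symbol and day.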

All the code is written in Python and orchestrated with Apache Airflow, managed with Astro.

PLEASE FEEL FREE TO SCALE THIS FURTHER, OR SHOOT ME A MESSAGE IF YOU HAVE ANY QUESTIONS ABOUT IT.

HAPPY LEARNING 😊

https://www.linkedin.com/in/ravichintalapudi/

https://www.linkedin.com/posts/ravichintalapudi_airflowetl-activity-7395172711427346432-82L7?utm_source=share&utm_medium=member_desktop&rcm=ACoAACSDZLkB8pGSZjH-1k9kq1Zwbr5CqtSYSzU

Regards