AQI-DATA-PIPELINE

End-to-end AQI data engineering pipeline using Apache Airflow, dbt, Snowflake, and AWS S3 with Bronz


AWS S3 · Apache Airflow · Snowflake · dbt

About this project

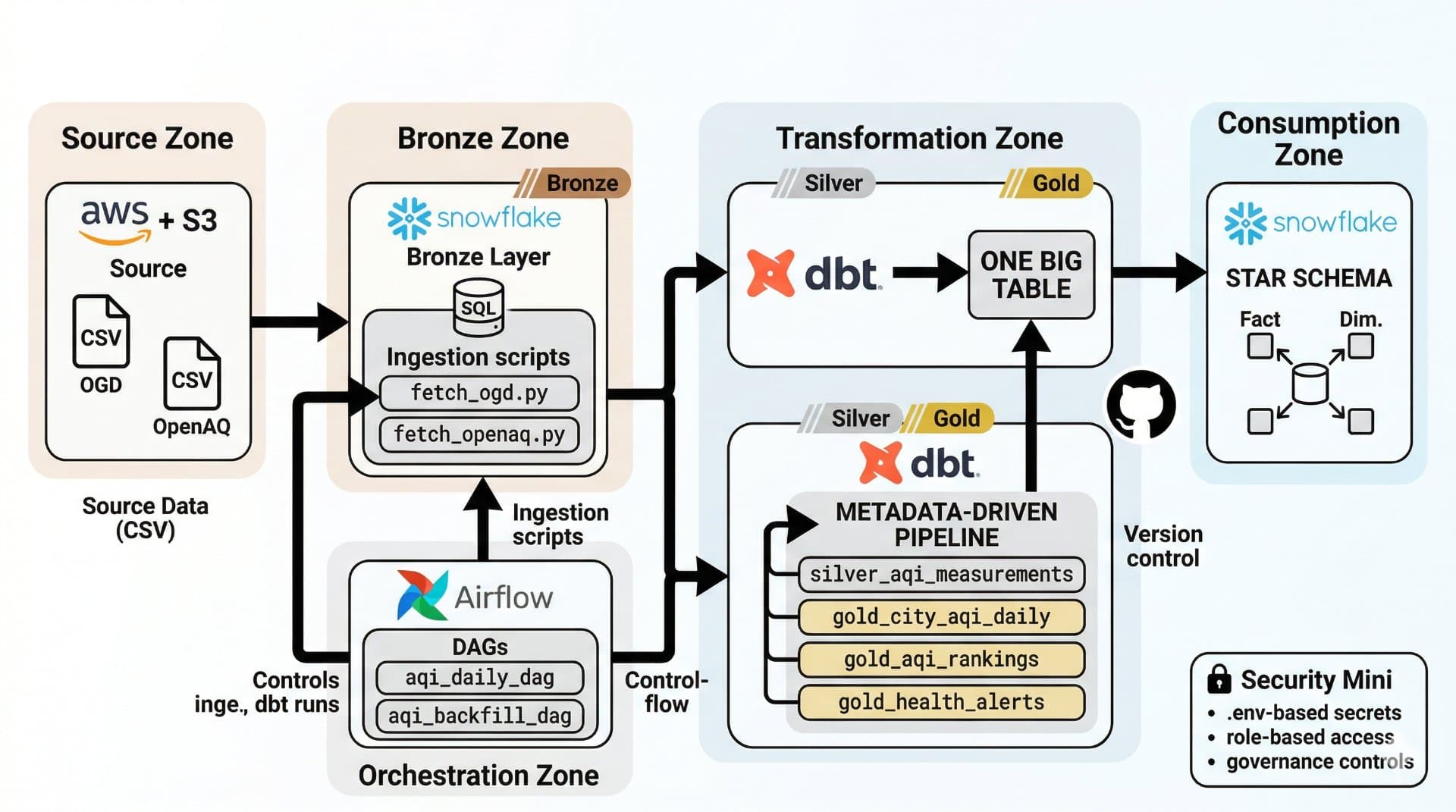

This project is an end-to-end data engineering pipeline that processes and analyzes real-time Air Quality Index (AQI) data. It demonstrates a complete ELT workflow, from data ingestion through visualization, using modern data engineering tools and cloud technologies.

The pipeline collects real-time AQI data from the Government of India’s Open Data API and stores the raw responses in AWS S3, which serves as the data lake. The data is then loaded into Snowflake, where it is organized into a multi-layer architecture: Bronze (raw), Silver (cleaned and validated), and Gold (business-ready analytics). dbt handles the transformations, which keeps the data models modular, scalable, and testable.
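To make the Bronze and Silver layers concrete, here is a minimal Python sketch of the two ideas: a date-partitioned S3 object key for a raw API response, and a validation step that turns one raw record into a cleaned one. In the project itself the cleaning is done in dbt models, so this only illustrates the logic. The key layout, the function names, and the field names (`city`, `pollutant_id`, `pollutant_avg`) are assumptions, not taken from the project.

```python
import math
from datetime import datetime, timezone
from typing import Optional


def bronze_object_key(fetched_at: datetime, prefix: str = "bronze/aqi") -> str:
    """Build a date-partitioned key for one raw API response (hypothetical layout)."""
    return (
        f"{prefix}/year={fetched_at:%Y}/month={fetched_at:%m}/day={fetched_at:%d}/"
        f"aqi_{fetched_at:%Y%m%dT%H%M%SZ}.json"
    )


def to_silver(raw: dict) -> Optional[dict]:
    """Clean one raw record; return None when it fails validation.

    Field names are assumed for illustration and may differ from the real API.
    """
    city = str(raw.get("city", "")).strip()
    pollutant = str(raw.get("pollutant_id", "")).strip().upper()
    try:
        value = float(raw.get("pollutant_avg"))
    except (TypeError, ValueError):
        return None  # reading is missing or a non-numeric placeholder such as "NA"
    if not city or not pollutant or not math.isfinite(value) or value < 0:
        return None
    return {"city": city.title(), "pollutant": pollutant, "avg_value": value}


fetched = datetime(2024, 1, 5, 6, 30, tzinfo=timezone.utc)
key = bronze_object_key(fetched)
cleaned = to_silver({"city": " new delhi ", "pollutant_id": "pm2.5", "pollutant_avg": "182"})
rejected = to_silver({"city": "Pune", "pollutant_id": "PM10", "pollutant_avg": "NA"})
```

Partitioning the key by date keeps each day's raw files together, which makes daily loads and reprocessing of a single day straightforward.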

Apache Airflow orchestrates the pipeline, running automated daily processing and supporting historical backfills. Incremental loading processes only new data on each run, which reduces processing time and avoids full table scans. Built-in data quality tests and logging help keep the pipeline reliable and maintain data integrity throughout.
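The two scheduling ideas in this paragraph, backfilling and incremental loading, can be sketched in plain Python without Airflow or Snowflake. The sketch below assumes a high-water-mark approach, where each run keeps only rows newer than the latest timestamp already loaded. The project does not say which incremental strategy it uses, and the function names and the `last_update` field are hypothetical.

```python
from datetime import date, datetime, timedelta
from typing import List


def backfill_dates(start: date, end: date) -> List[date]:
    """Return every daily run date from start to end, inclusive."""
    days = (end - start).days
    return [start + timedelta(days=i) for i in range(days + 1)]


def select_new_rows(rows: List[dict], watermark: datetime) -> List[dict]:
    """Keep only rows strictly newer than the last loaded timestamp."""
    return [row for row in rows if row["last_update"] > watermark]


def next_watermark(rows: List[dict], watermark: datetime) -> datetime:
    """Advance the watermark to the newest row seen, or keep it if there are no rows."""
    return max((row["last_update"] for row in rows), default=watermark)


runs = backfill_dates(date(2024, 1, 30), date(2024, 2, 1))

watermark = datetime(2024, 2, 1, 9, 0)
incoming = [
    {"city": "Delhi", "last_update": datetime(2024, 2, 1, 8, 0)},   # already loaded
    {"city": "Delhi", "last_update": datetime(2024, 2, 1, 10, 0)},  # new
    {"city": "Pune", "last_update": datetime(2024, 2, 1, 11, 0)},   # new
]
new_rows = select_new_rows(incoming, watermark)
watermark = next_watermark(new_rows, watermark)
```

In an Airflow deployment, each date returned by `backfill_dates` would correspond to one scheduled run, and in dbt the same watermark filter is typically expressed inside an incremental model.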

The final processed data is visualized using Power BI dashboards, providing insights such as city-level AQI trends, pollutant breakdowns (PM2.5, PM10, NO₂, etc.), health alerts, and historical comparisons.
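As an illustration of how a health alert could be derived in the Gold layer, the sketch below maps an AQI value to the category bands of India's National Air Quality Index (Good, Satisfactory, Moderate, Poor, Very Poor, Severe). The project does not state its own alert rules, so the `needs_health_alert` function and its threshold of 200 are assumptions.

```python
from bisect import bisect_left

# Upper bounds of the National AQI bands: 0-50, 51-100, 101-200, 201-300, 301-400, 401+.
_UPPER_BOUNDS = [50, 100, 200, 300, 400]
_CATEGORIES = ["Good", "Satisfactory", "Moderate", "Poor", "Very Poor", "Severe"]


def aqi_category(aqi: float) -> str:
    """Return the National AQI category name for an AQI value."""
    if aqi < 0:
        raise ValueError("AQI cannot be negative")
    # bisect_left finds the first band whose upper bound is >= aqi.
    return _CATEGORIES[bisect_left(_UPPER_BOUNDS, aqi)]


def needs_health_alert(aqi: float, threshold: float = 200) -> bool:
    """Flag readings above the threshold (assumed here: anything worse than Moderate)."""
    return aqi > threshold


readings = {"Delhi": 342, "Pune": 96, "Mumbai": 200}
alerts = {
    city: aqi_category(aqi)
    for city, aqi in readings.items()
    if needs_health_alert(aqi)
}
```

A dashboard could then show the category as a color band per city and list only the cities in `alerts`.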

The project is built with Python, Docker, AWS S3, Snowflake, dbt, and Airflow, a stack chosen for scalability and production readiness. It covers key data engineering concepts such as pipeline automation, data warehousing, transformation modeling, and real-time analytics, which makes it a strong portfolio project for data engineering roles.